Azure OpenAI Service safeguards your AI projects with built-in content filtering. This guide will explain how to configure these filters using Azure OpenAI Studio (preview).

How I Found Out About Azure’s Content Filters

It all started when I noticed something odd about the AI responses on my site, Paperguide (previously ChatWithPDF.AI): they just didn't match what I was used to seeing from OpenAI. Curious to find out why, I dug into Azure's settings to see what might be causing the difference. That's when I stumbled across something called content filters. These filters were checking and rating everything that went into and came out of the AI, trying to block anything they judged too risky. Discovering them was a lightbulb moment for me. It explained the discrepancies and pushed me to learn how to tweak the filters to get the results I needed.

What are Content Filters?

Azure OpenAI Service utilizes content filters to identify and potentially prevent the generation of harmful content across four categories: violence, hate speech, sexual content, and self-harm. The filtering system analyzes both user prompts and model completions, categorizing content severity on a scale of safe, low, medium, and high.
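To make the idea of per-category ratings concrete, here is a minimal Python sketch that summarizes a set of annotations. The `sample_annotations` dictionary and its field names (`filtered`, `severity`) are assumptions made up for illustration, as is the `summarize` helper; check the official documentation for the exact shape your API version returns.

```python
# Hypothetical sample: one rating per content category.
# The field names ("filtered", "severity") are assumptions for illustration.
sample_annotations = {
    "hate": {"filtered": False, "severity": "safe"},
    "self_harm": {"filtered": False, "severity": "safe"},
    "sexual": {"filtered": False, "severity": "low"},
    "violence": {"filtered": True, "severity": "medium"},
}


def summarize(annotations):
    """Return (category, severity, filtered) tuples for anything not rated safe."""
    return [
        (category, result["severity"], result["filtered"])
        for category, result in sorted(annotations.items())
        if result["severity"] != "safe"
    ]


for category, severity, filtered in summarize(sample_annotations):
    action = "blocked" if filtered else "allowed"
    print(f"{category}: {severity} ({action})")
```

A summary like this is handy when you are trying to work out why a response differs from what you expected, since it shows which category triggered and at what level.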

Default Settings

By default, content filtering is enabled and set to a medium severity threshold for all categories in both prompts and completions. This means content classified as medium or high severity is filtered, while content flagged as low or safe is permitted.
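The default rule can be sketched in a few lines of Python. This only models the threshold logic described above and does not call Azure; the `Severity` enum and `is_filtered` function are hypothetical names chosen for this example.

```python
from enum import IntEnum


class Severity(IntEnum):
    """The four-step severity scale, ordered from least to most severe."""
    SAFE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def is_filtered(severity: Severity, threshold: Severity = Severity.MEDIUM) -> bool:
    """Content is filtered when its severity is at or above the threshold."""
    return severity >= threshold


# With the default medium threshold, only medium and high are filtered.
for level in Severity:
    print(level.name, "filtered" if is_filtered(level) else "permitted")
```

Lowering the threshold to `Severity.LOW` makes the filter stricter, because low-severity content then gets filtered as well.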

Customizing Filters

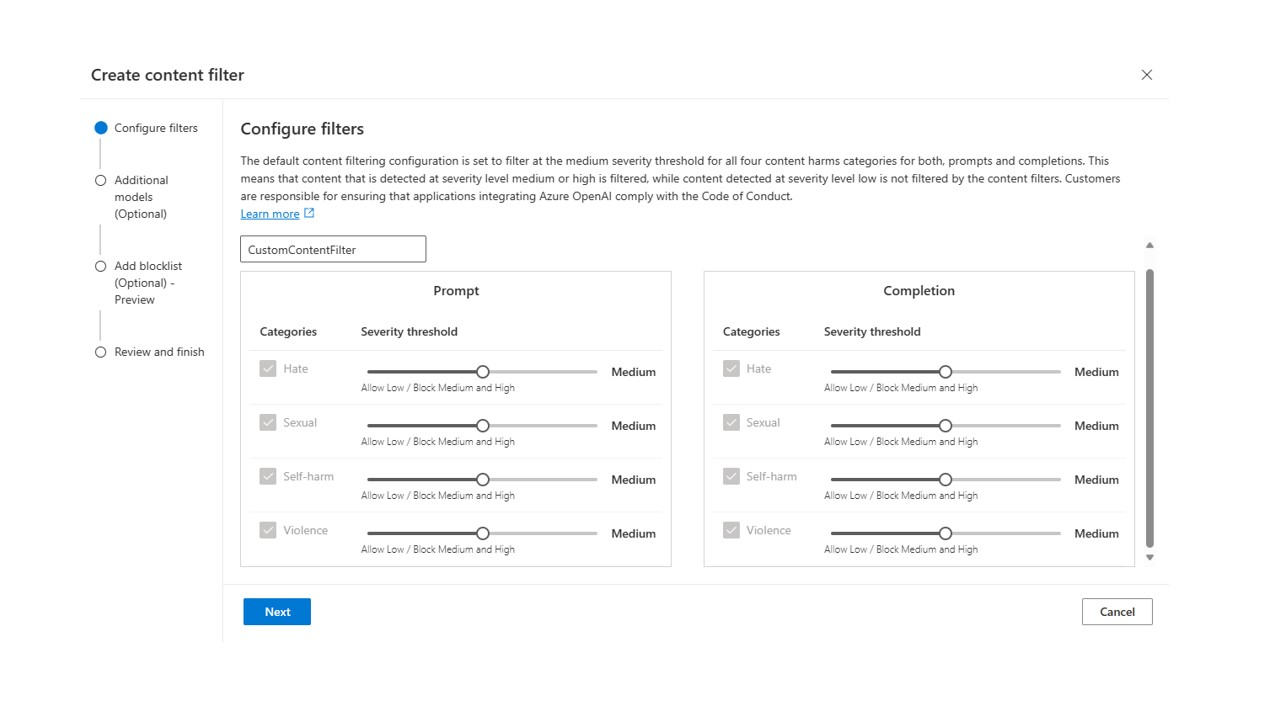

Azure OpenAI Studio allows you to create customized content filtering configurations for your resources. These configurations enable you to adjust filtering levels independently for prompts and completions within each content category. There are three configurable severity levels: low, medium, and high.
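One way to picture such a configuration is as two sets of thresholds, one for prompts and one for completions. The sketch below is an assumption-laden model for illustration only: `FilterConfig`, `blocks`, and the category keys are hypothetical names, not part of any Azure SDK.

```python
from dataclasses import dataclass, field

SEVERITY_ORDER = ["safe", "low", "medium", "high"]
CATEGORIES = ["violence", "hate", "sexual", "self_harm"]


def default_thresholds():
    """Every category starts at the default medium threshold."""
    return {category: "medium" for category in CATEGORIES}


@dataclass
class FilterConfig:
    """Hypothetical model of a custom configuration (not an Azure SDK type)."""
    name: str
    prompt_thresholds: dict = field(default_factory=default_thresholds)
    completion_thresholds: dict = field(default_factory=default_thresholds)

    def blocks(self, direction: str, category: str, severity: str) -> bool:
        """True when content of this severity would be filtered."""
        if direction not in ("prompt", "completion"):
            raise ValueError(f"unknown direction: {direction}")
        thresholds = (
            self.prompt_thresholds
            if direction == "prompt"
            else self.completion_thresholds
        )
        threshold = thresholds[category]
        return SEVERITY_ORDER.index(severity) >= SEVERITY_ORDER.index(threshold)


# Stricter filtering for violent content in prompts only.
strict = FilterConfig(name="strict-prompts")
strict.prompt_thresholds["violence"] = "low"

print(strict.blocks("prompt", "violence", "low"))      # filtered in prompts
print(strict.blocks("completion", "violence", "low"))  # still allowed in completions
```

Keeping prompts and completions separate mirrors the Studio's sliders: tightening one direction leaves the other at its previous level.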

Turning Off Filters (Limited Access Required)

In specific scenarios, you might require full control over content filtering, including partially or entirely disabling filters. However, approval is mandatory for such adjustments. Managed customers can apply for full control through the "Azure OpenAI Limited Access Review: Modified Content Filters" form.

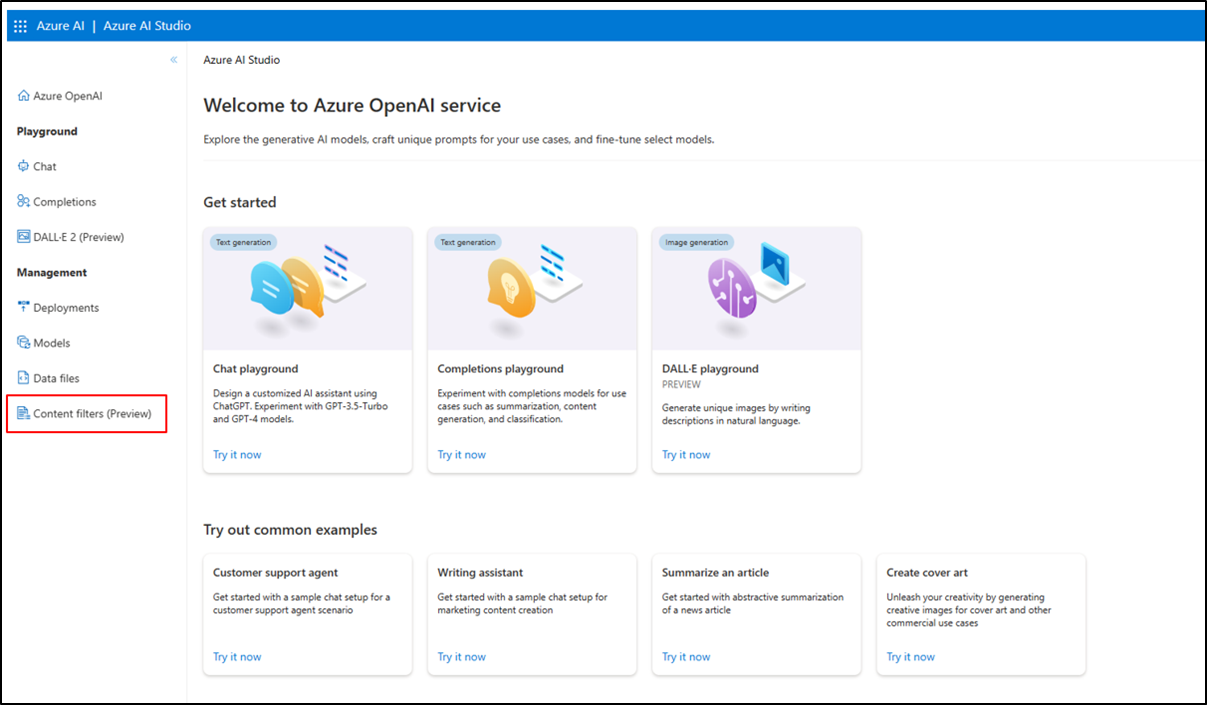

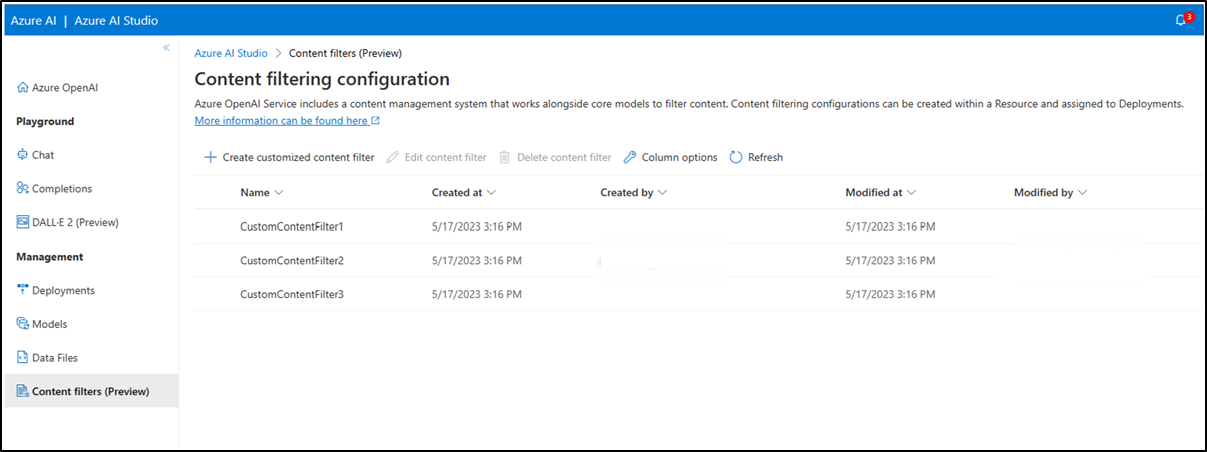

Configuring Filters in Azure OpenAI Studio

- Access Azure OpenAI Studio and navigate to the "Content Filters" tab.

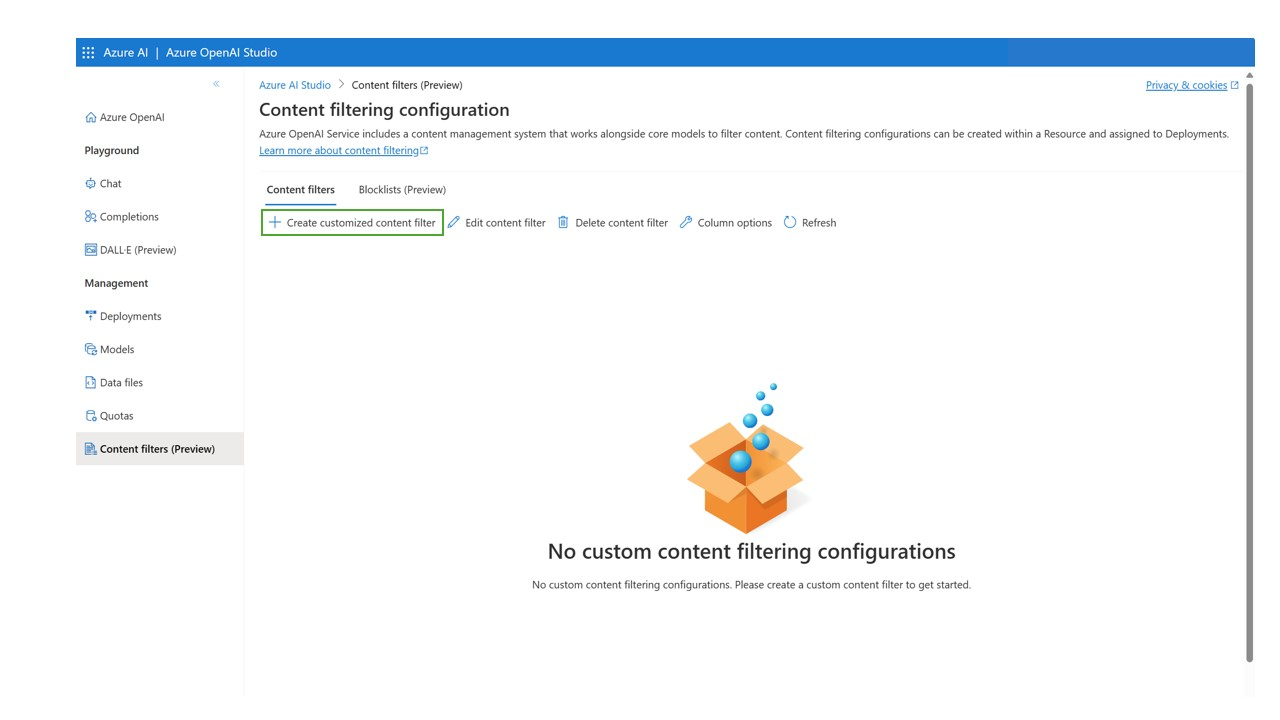

- Create a new content filtering configuration and assign a name.

- The configuration view displays the default settings (medium and high severity filtering for all categories in prompts and completions). You can modify the severity level for each content category, independently for prompts and completions, using the provided sliders. For stricter filtering, move a slider to a lower severity level (e.g., filtering low-severity content for specific categories in prompts).

- Approved users can entirely disable filtering for specific categories or turn off all filters and annotations.

- Create multiple configurations as needed for various use cases.

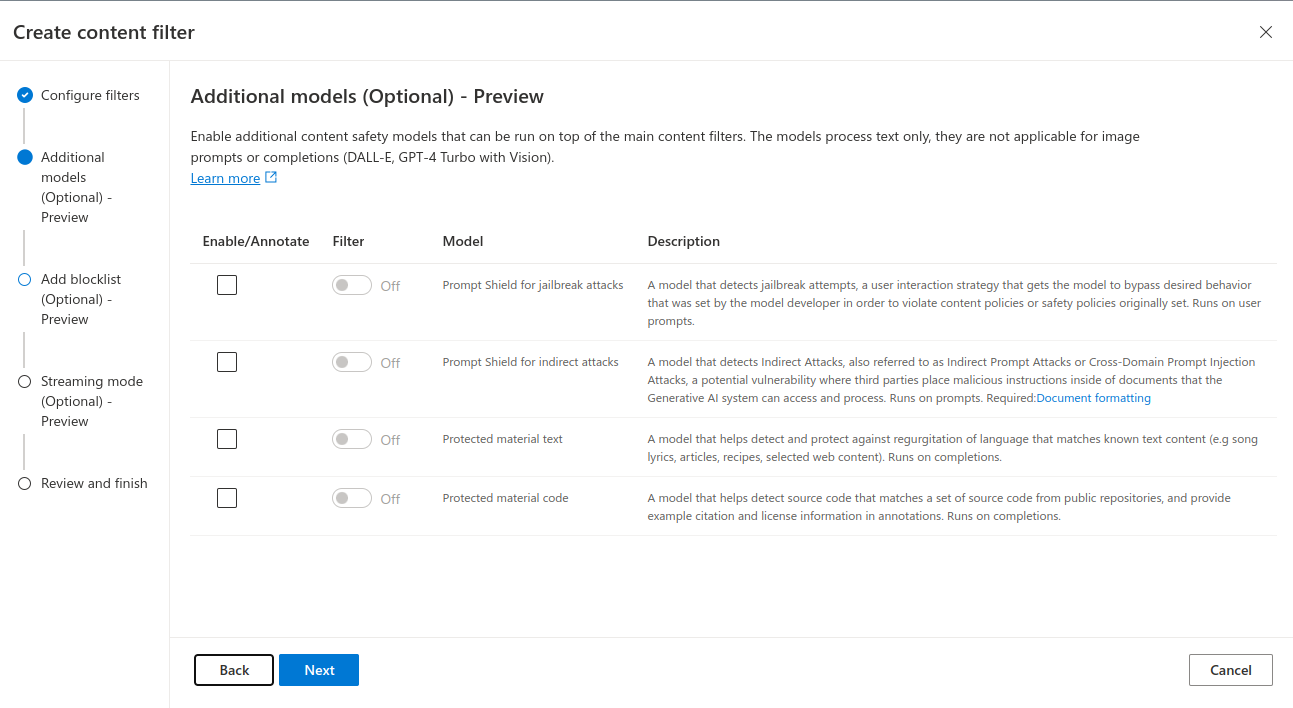

- To enable optional models (jailbreak risk detection, protected material), select the respective checkbox. Choose between "Annotate" (identifies content but doesn't filter) or "Filter" (removes flagged content).

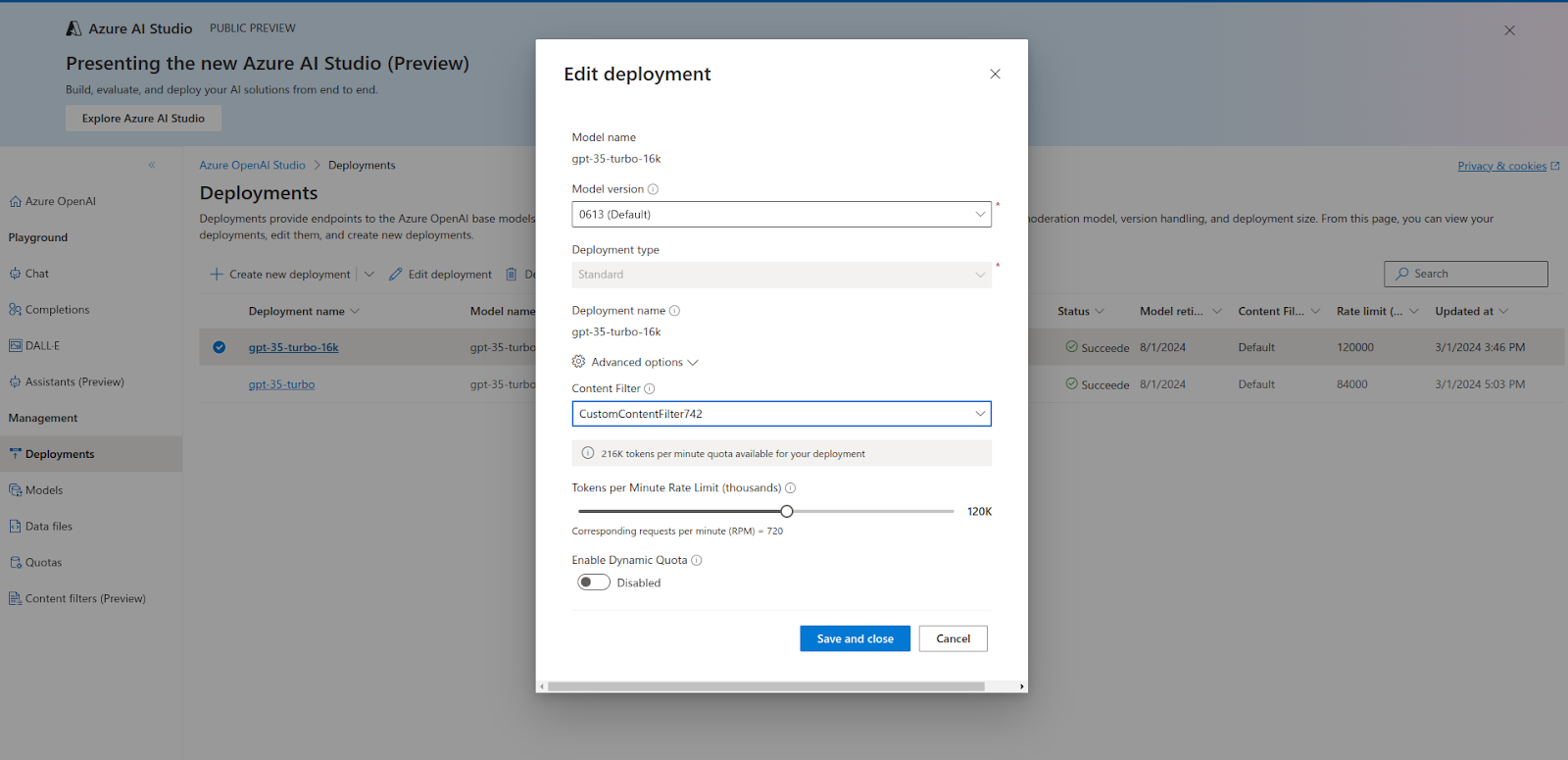

- Associate your content filtering configuration with one or more deployments. Navigate to the "Deployments" tab, select "Edit deployment," and choose the desired configuration from the "Content Filter" dropdown menu. Click "Save and close" to confirm.

- The "Content Filters" tab allows you to edit or delete configurations (one at a time). Remember to unassign a configuration from deployments before deletion.
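The difference between "Annotate" and "Filter" for the optional models in the steps above can be sketched as follows. This is a simplified illustration under stated assumptions, not Azure's implementation: `Outcome` and `apply_optional_model` are hypothetical names, and the `detected` flag stands in for whatever the detection model reports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    text: Optional[str]  # None when the content was removed
    flagged: bool        # True when the optional model detected something


def apply_optional_model(text: str, detected: bool, mode: str) -> Outcome:
    """Apply an optional model's verdict in 'annotate' or 'filter' mode."""
    if mode not in ("annotate", "filter"):
        raise ValueError(f"unknown mode: {mode}")
    if detected and mode == "filter":
        # Filter mode removes flagged content.
        return Outcome(text=None, flagged=True)
    # Annotate mode (or nothing detected): content passes through, flag recorded.
    return Outcome(text=text, flagged=detected)


print(apply_optional_model("some completion", detected=True, mode="annotate"))
print(apply_optional_model("some completion", detected=True, mode="filter"))
```

Starting in annotate mode is a low-risk way to see how often a model would trigger before you let it remove content.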

By configuring content filters carefully, you can help ensure the responsible and ethical use of Azure OpenAI Service in your projects. For a detailed guide on managing and adjusting content filters, see the official Microsoft documentation.